What place has AI in our metacognitive classroom?

The past few weeks have been ablaze with news about AI, and specifically ChatGPT, focusing mainly on its potential to become a homework shortcut or a useful tool for teachers. One school, Alleyn’s in Dulwich, has moved to a flipped learning model because access to ChatGPT gives students an easy way to generate their homework. Students will now be set tasks to research and prepare for their next lesson instead of sitting tests or writing essays. However, is this just another example of the forward march of algorithms and other AI technologies without anyone asking about the ethical or moral implications?

Much of the conversation is negative and fearful, perhaps with good reason, as algorithms are now present in every aspect of our lives. The steady progress of artificial intelligence seems ready to take our human hands from the wheel and continue driving. Thankfully, there are also many positives, for example the ability of a robot to spot cancers or to sort through and order a multitude of data.

However, that does leave some big questions unanswered: What place for humankind? What is so unique about humanity? Can the adoption of some AI dehumanise the world? A recent article in The Sunday Times Magazine focused on the newly inaugurated Oxford Institute for Ethics in AI, which is tackling some of these questions. The institute was established by a donation of £175 million from Stephen Schwarzman. Some might suggest this was to balance his donation of $350 million to MIT to fund its study of computer science, and that it demonstrates a desire to examine the rapid growth of AI.

The institute is calling for more philosophers and historians to lead a cultural revolution in attitudes towards AI and thereby bring in smarter regulation. “Humanities are in a precarious condition, philosophy is in a precarious condition,” says John Tasioulas, the Director of the new institute. “But they are going to be critical for the 21st century. To build AI we need engineers, but to interpret it, to decide how it should be applied and what kind of world we want to live in requires philosophers, historians and lawyers.”

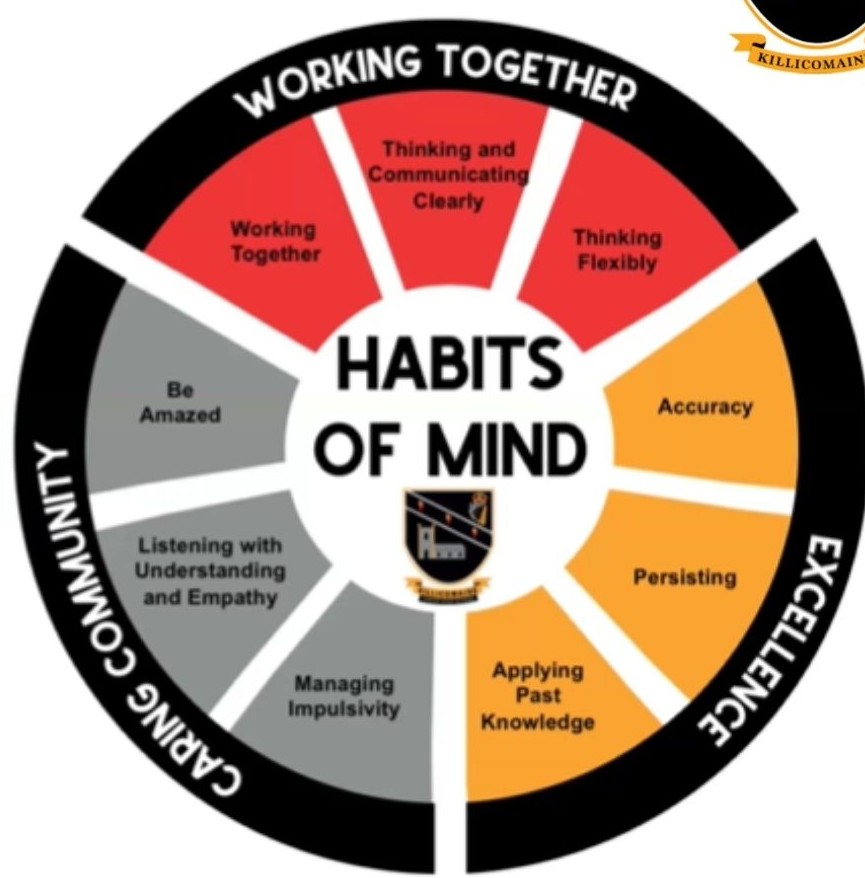

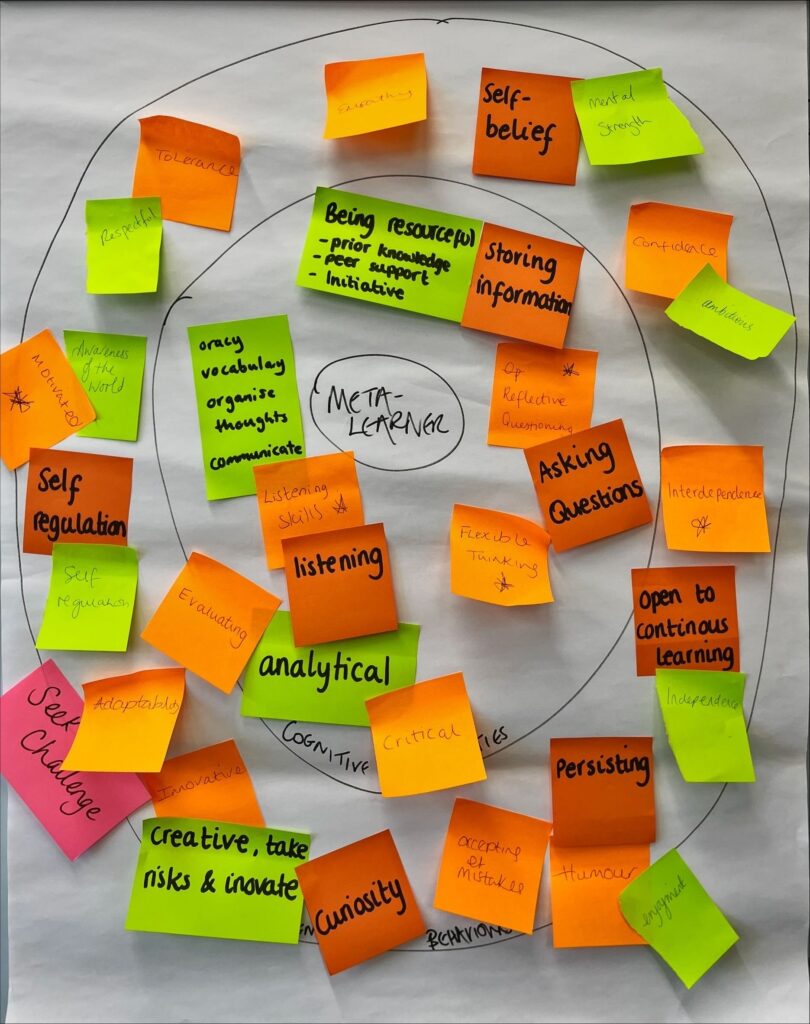

The need for humans who can interpret new technology and ask those critical questions really resonated as I read the article. Developing each student as an independent, self-aware thinker with a love of learning is the mission of many Thinking Schools. As each school embarks on its journey to become a ‘Thinking School’, our starting point (after looking at why metacognition matters) is considering how we best prepare pupils who are going to be living into the 22nd century. With progress and change so prevalent, it is hard to pin down and map the jobs our students will undertake. Instead, we need to take a step back and think about the skills and attitudes required. Each Drive Team sets its target by identifying the Cognitive Capabilities and Intelligent Learning Behaviours required for its meta-learners to thrive at school and in whatever life looks like beyond that stage.

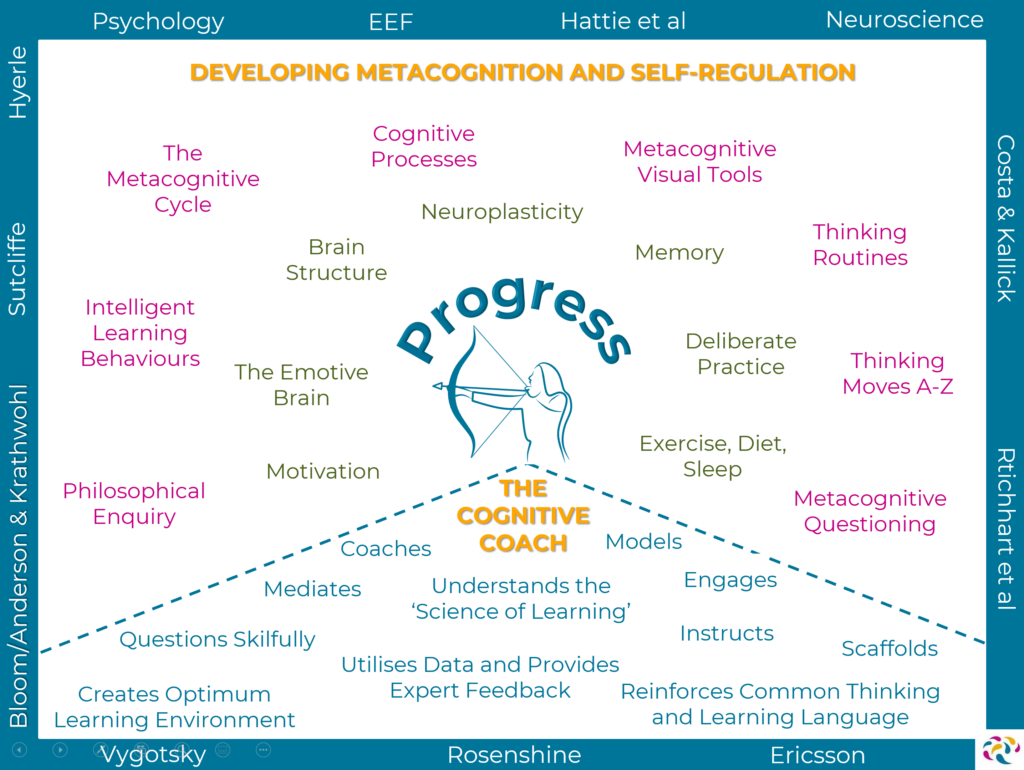

A meta-learner, armed with tools and strategies, delights in answering questions such as “What does the back of a rainbow look like?”. These don’t necessarily have a correct answer but provide space for reasoning and reflection. Additionally, they can apply logic when asked, “Through what angle does the hour hand of a clock rotate between 1am and 8am?”. This definitely does have a correct answer and gives the meta-learner a chance to apply their previous knowledge and skills. In our classrooms, we can use much of the open source technology to generate questions on a particular topic and assess a range of knowledge. However, it does not monitor progress towards the goal by targeting areas of weakness or giving purposeful feedback. A metacognitive approach is about ‘Zooming out’ and having an understanding of the wider context. It requires subtlety to identify which tools or strategies are appropriate to this specific context. The Thinking Matters’ Big Picture (below) brings clarity and direction about how to approach the EEF’s guidance and recommendations in order to prepare our learners for the unknown future.

At the moment, generative AI is very much in its infancy, but it is not something that we can ignore, and there are plenty of excellent blogs for teachers about ways to use some of the new open source elements to best effect. Thankfully, metacognition and self-regulation are even more important in this climate. Our students need to be equipped with an even deeper understanding of the Science of Learning so that they can optimise the potential of their brains and begin to ponder what we sacrifice in adopting certain technologies.

If you are interested in finding out more about Thinking Matters’ Core Approach then please book a meeting: Calendly/Metacognition-Meeting

———

Glossary: ChatGPT describes itself as an AI assistant that can help out with various things such as providing information, answering questions, and more. It is not human, but it is designed to communicate as if it were a human being.

Want to read some more? https://www.tes.com/magazine/teaching-learning/secondary/chatgpt-could-ai-bot-be-writing-students-homework